There is a long list of reasons why we are invited to do assessments, but it can be summarised in a few sentences: “we have invested a ton of resources”, “it does not work”, “nobody uses it”, “the data is crap”. Changes take ages to complete and it feels like the project is never finished.

These statements are often true, but what is often overlooked is that the underlying issues are usually connected. Small problems can cause big effects and frustration down the line. That is why we use a framework that evaluates data capabilities across six proven dimensions, from technical quality to business impact. In this article, we explain the thinking behind the model and how it consistently surfaces blind spots that internal teams miss.

The outcome varies per customer. Some need to reshape fundamentals, while for others the changes are minimal. And sometimes we have to conclude that, given the complexity, budget and team, we couldn’t have done a better job ourselves.

The model behind the method

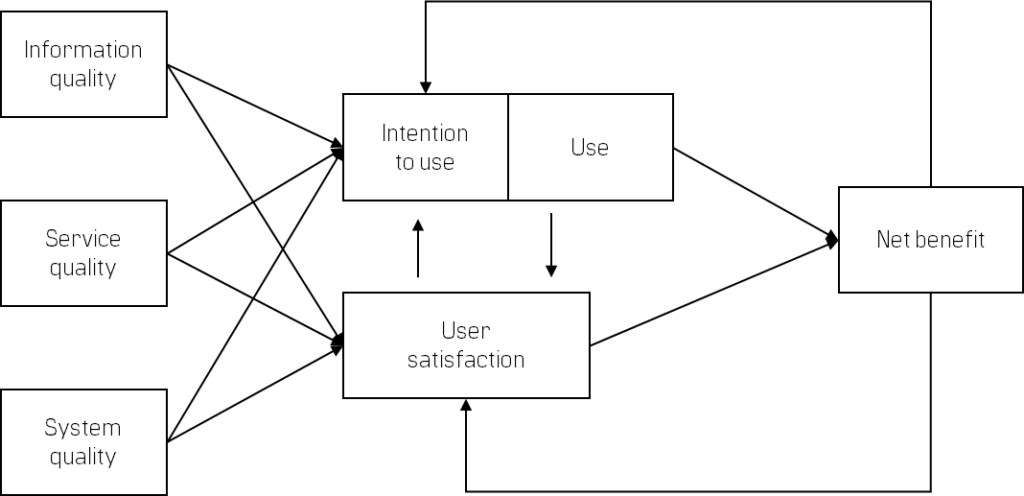

Our assessment is built on the DeLone & McLean Information Systems Success Model, a well-established academic framework that maps the chain from technical quality through user adoption to business impact. We’ve adapted it specifically for data and analytics environments across six interconnected dimensions:

Information quality examines the data itself: accuracy, completeness, timeliness, consistency, relevance and lineage. We look at how data quality is measured (if at all), who is responsible for it, and what happens when issues are detected. In many organisations, data quality is treated as a technical problem when it’s actually an organisational one, and our framework reflects that distinction.
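To make "how data quality is measured" concrete, here is a minimal sketch of two of the checks named above, completeness and timeliness. The record layout, the field names (`order_id`, `customer_id`, `loaded_at`) and the 24-hour freshness window are hypothetical assumptions for illustration, not part of the framework itself.

```python
from datetime import datetime, timedelta


def completeness(rows, required_fields):
    """Share of rows in which every required field is present and non-empty."""
    if not rows:
        return 0.0
    complete = sum(
        all(row.get(field) not in (None, "") for field in required_fields)
        for row in rows
    )
    return complete / len(rows)


def timeliness(rows, timestamp_field, now, max_age):
    """Share of rows whose timestamp is no older than max_age."""
    if not rows:
        return 0.0
    fresh = sum(
        row.get(timestamp_field) is not None
        and now - row[timestamp_field] <= max_age
        for row in rows
    )
    return fresh / len(rows)


# Hypothetical sample records; in practice these would come from the platform.
now = datetime(2024, 1, 10, 12, 0)
orders = [
    {"order_id": 1, "customer_id": "C1", "loaded_at": datetime(2024, 1, 10, 6, 0)},
    {"order_id": 2, "customer_id": None, "loaded_at": datetime(2024, 1, 10, 7, 0)},
    {"order_id": 3, "customer_id": "C3", "loaded_at": datetime(2024, 1, 8, 6, 0)},
    {"order_id": 4, "customer_id": "C4", "loaded_at": datetime(2024, 1, 10, 11, 0)},
]

completeness_score = completeness(orders, ["order_id", "customer_id"])
timeliness_score = timeliness(orders, "loaded_at", now, timedelta(hours=24))
```

A number like this only covers the technical half of the picture. Who owns the metric and what happens when it drops are the organisational questions the assessment also asks.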

System quality covers the technical foundation: your data warehouse/lakehouse/data mesh architecture, ingestion pipelines, transformation layers, performance, reliability, security and the tools your team uses every day. We’re not just checking whether these components exist. We’re evaluating how well they work together, how resilient they are, and whether they’re fit for the demands being placed on them.

Service quality is where most of the hidden problems live. This includes how data requests flow from business stakeholders to the data team, how priorities are set, how changes are tested and deployed, how incidents are handled, and how knowledge is documented and transferred. We also assess management support and whether grave business issues or moonshot initiatives are affecting all processes. A data platform is only as good as the service organisation that surrounds it. You can have world-class technology, but if there’s no clear process for prioritising what gets built, you’ll build the wrong things.

Use and adoption looks at who actually uses the platform, how often, and how deeply. Are people consuming pre-built dashboards or doing self-service analysis? Is adoption growing or stagnating? Are certain teams or roles avoiding the platform entirely? The gap between intended use and actual use is often where the biggest opportunities hide.

User satisfaction captures how people experience the platform day to day. Do they trust the numbers? Can they find what they need without help? Are they satisfied with the support they receive? We’ve seen environments with excellent technical foundations where satisfaction is low because response times on requests are slow, documentation is poor, or users feel ignored when they raise issues.

Net benefit ties everything back to business impact: better decision-making, time saved, cost reduction, strategic alignment and risk reduction. This is where the assessment moves from diagnostic to strategic, connecting platform performance to outcomes that leadership cares about.

Why 70 assessment points

Across these six areas, we’ve identified around 70 distinct assessment points. The number represents the minimum level of granularity needed to be genuinely diagnostic. Too few points and you get the kind of high-level assessment that tells you what you already know. Too many and it becomes an academic exercise that takes months to complete.

At around 70 assessment points, we can complete a thorough evaluation in two to four weeks while still catching the issues that generic frameworks miss.

| Information quality | Service quality | System quality | Use (intention) | User satisfaction | Net benefits |
| --- | --- | --- | --- | --- | --- |
| Accuracy | Delivery | Response times | Adoption | Usefulness | Decision-making |
| Completeness | Support | Uptime | Frequency | Ease of use | Time-saving |
| Timeliness | Governance | Ease of use | Width | Trust | Cost/revenue impact |
| Relevance | Decision flow | Scalability | Depth | Satisfaction support | Strategic alignment |
| Presentation | Backlog | Security & access | Type of usage | Pain points | Operational support |
| Structure | Timelines | Integration | Expectation | | Risk reduction |
| Consistency | Flexibility | | | | |
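As an illustration of how scores on individual assessment points might roll up to the six areas, here is a minimal sketch. The 1 to 5 scale, the sample scores and the `summarise` helper are assumptions made for this example; the article does not specify the actual scoring scale or aggregation method.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical scores on an assumed 1-5 scale: (area, assessment point, score).
scores = [
    ("Information quality", "Accuracy", 4),
    ("Information quality", "Completeness", 3),
    ("Service quality", "Support", 2),
    ("Service quality", "Backlog", 1),
    ("System quality", "Uptime", 5),
    ("System quality", "Scalability", 4),
]


def summarise(scores):
    """Return the mean score and the weakest assessment point per area."""
    by_area = defaultdict(list)
    for area, point, score in scores:
        by_area[area].append((point, score))

    summary = {}
    for area, items in by_area.items():
        weakest_point, _ = min(items, key=lambda item: item[1])
        summary[area] = {
            "mean": mean(score for _, score in items),
            "weakest": weakest_point,
        }
    return summary


summary = summarise(scores)
```

Keeping the weakest point next to the average matters: an area-level mean on its own hides exactly the kind of detail that a granular assessment is meant to surface.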

How the dimensions connect

Each dimension is scored individually, but the real value lies in how they connect. This is what the DeLone & McLean model makes explicit: quality drives adoption and satisfaction, which drive business impact — and when impact falls short, it feeds back into reduced trust and usage.

We use these connections to trace root causes rather than treating symptoms.

For example, one client brought us in because their dashboards had “data quality problems.” When we assessed the full chain, the data itself was fine. The issue was a process gap: no clear ownership of the ingestion pipeline, no monitoring and no defined escalation when something broke. What looked like an information quality problem was a service quality problem. Fixing the process resolved the data issues within weeks.

Another common pattern: leadership invests in a modern BI platform but adoption stays flat. A system-quality-only review would find nothing wrong. Our framework surfaces the actual blockers: perhaps no training programme exists, the request backlog is months long, or the reports being built don’t answer the questions business users actually have. Those sit in the service quality, user satisfaction and use dimensions that most technical reviews never touch.
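The idea of tracing a symptom back through the chain can be sketched as a walk over the links between dimensions. The version below is deliberately simplified: it follows only the forward links (quality drives use and satisfaction, which drive net benefits) and ignores the feedback loop. The scores, the threshold of 3 and the `root_causes` helper are hypothetical.

```python
# Simplified upstream links between dimensions (feedback loop omitted).
UPSTREAM = {
    "Net benefits": ["Use", "User satisfaction"],
    "Use": ["Information quality", "System quality", "Service quality"],
    "User satisfaction": ["Information quality", "System quality", "Service quality"],
}


def root_causes(symptom, scores, threshold=3):
    """Walk upstream from a weak dimension.

    Returns the weak dimensions that have no weak upstream dimension,
    i.e. the places where the chain of low scores starts.
    """
    causes = []
    seen = set()
    stack = [symptom]
    while stack:
        dimension = stack.pop()
        if dimension in seen:
            continue
        seen.add(dimension)
        weak_parents = [
            parent
            for parent in UPSTREAM.get(dimension, [])
            if scores[parent] < threshold
        ]
        if weak_parents:
            stack.extend(weak_parents)
        elif scores[dimension] < threshold:
            causes.append(dimension)
    return sorted(causes)


# Hypothetical scores on an assumed 1-5 scale, echoing the flat-adoption pattern.
scores = {
    "Information quality": 4,
    "System quality": 4,
    "Service quality": 2,
    "Use": 2,
    "User satisfaction": 2,
    "Net benefits": 2,
}

causes = root_causes("Net benefits", scores)
```

With these sample scores the walk ends at service quality, even though the visible symptom is weak net benefits. A real assessment works at the level of individual assessment points and relies on judgement, not only on a threshold.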

What makes this different from a maturity model

Most assessment models give you a maturity rating. Our framework is diagnostic. It gives you a specific, prioritised view of what’s working, what’s broken and what to fix first. And because it’s grounded in a model that maps cause and effect, the recommendations follow logically from the findings. They’re not generic best practices bolted on after the fact.

We also recognise that not every organisation needs to reach the maximum score on every dimension. A start-up has different requirements than a multinational FMCG company. The right target state depends on your strategy, your resources and the maturity of the business you’re serving. Our recommendations reflect that reality.

The deliverable

The assessment delivers three things. First, a clear breakdown across all 70 assessment points, scored and colour-coded by severity and relevance. This gives you an honest, comprehensive picture of where you stand, often for the first time. We regularly hear that the assessment surfaced issues teams were vaguely aware of but had never seen articulated clearly, with the cause-and-effect between domains mapped out.

Second, a prioritised list of improvements ranked by impact and effort. Not everything needs fixing at once. We help you distinguish between quick wins that build momentum and structural changes that take longer but unlock the most value.

Third, a practical roadmap co-created with your data team. We involve your team throughout the process, not just as interviewees but as collaborators in shaping what comes next. The goal is a shared understanding and a plan that people are committed to delivering, not a report that sits on a shelf.
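Ranking improvements by impact and effort, as described above, can be sketched in a few lines. The 1 to 5 scales, the cut-off values, the sample backlog and the `prioritise` helper are all assumptions for illustration; in practice the ranking is worked out together with the data team instead of being computed mechanically.

```python
from dataclasses import dataclass


@dataclass
class Improvement:
    name: str
    impact: int  # assumed scale: 1 (low) to 5 (high)
    effort: int  # assumed scale: 1 (low) to 5 (high)


def prioritise(items, max_quick_effort=2, min_quick_impact=3):
    """Sort by impact (high first), then effort (low first), and label each item."""
    ranked = sorted(items, key=lambda item: (-item.impact, item.effort))
    plan = []
    for item in ranked:
        if item.effort > max_quick_effort:
            label = "structural"
        elif item.impact >= min_quick_impact:
            label = "quick win"
        else:
            label = "low priority"
        plan.append((item.name, label))
    return plan


# Hypothetical backlog of findings.
backlog = [
    Improvement("Define pipeline ownership", impact=5, effort=2),
    Improvement("Migrate transformation layer", impact=5, effort=5),
    Improvement("Add freshness monitoring", impact=4, effort=1),
    Improvement("Rename legacy dashboards", impact=1, effort=1),
]

plan = prioritise(backlog)
```

Separating quick wins from structural changes in the output mirrors the distinction made in the prioritised list: both can rank high, but they belong in different parts of the roadmap.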

Who it’s for

The assessment delivers the most value when there’s a sense that things aren’t working as well as they should: when adoption is lower than expected, when delivery has slowed down, or when there’s a disconnect between what the data team builds and what the business actually needs.
